GPU Server

Dedicated NVIDIA GPU power for AI, machine learning, and rendering, hosted in Germany and powered by 100% carbon-neutral electricity.

RTX 4000 SFF Ada

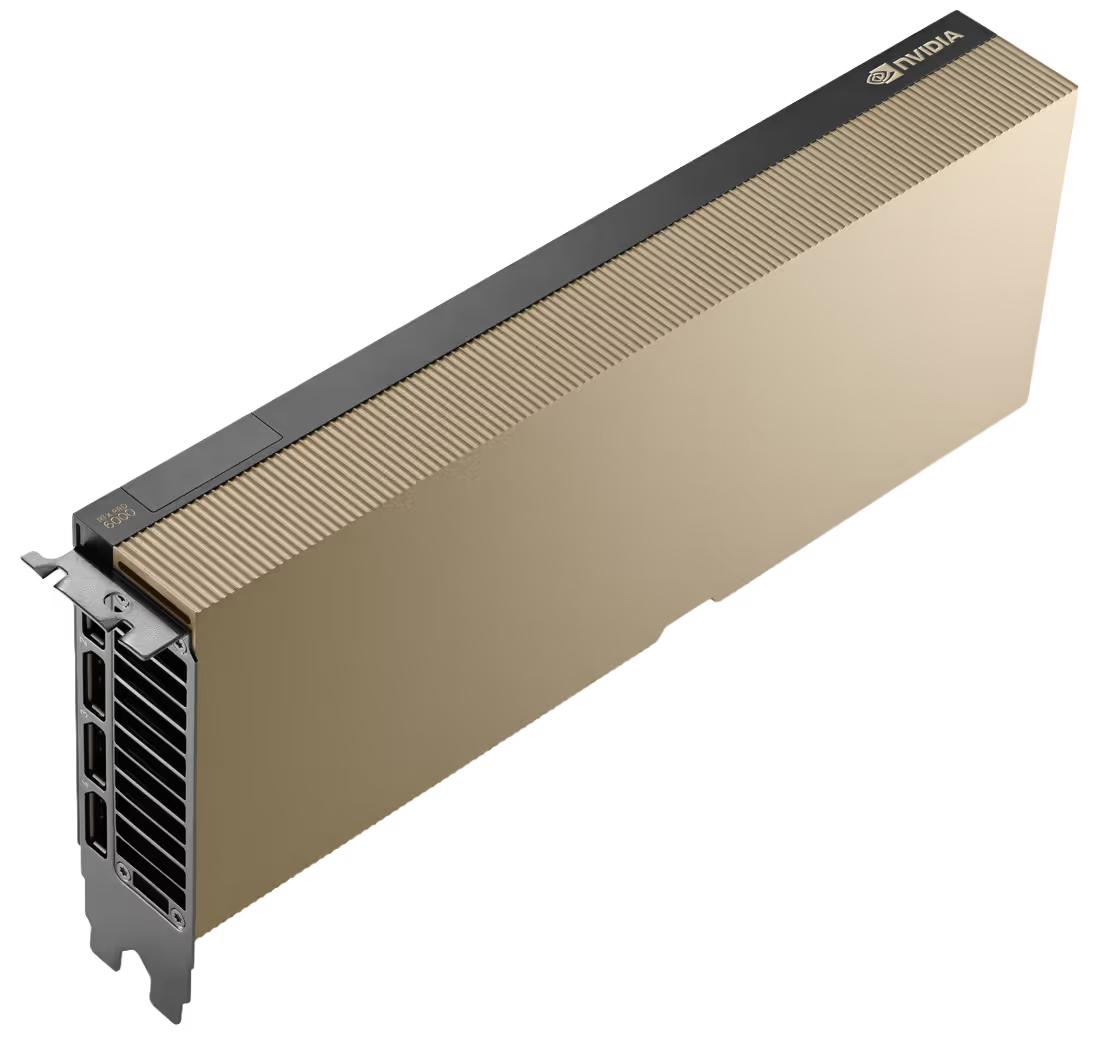

RTX PRO 6000 Blackwell

Flexibly configurable

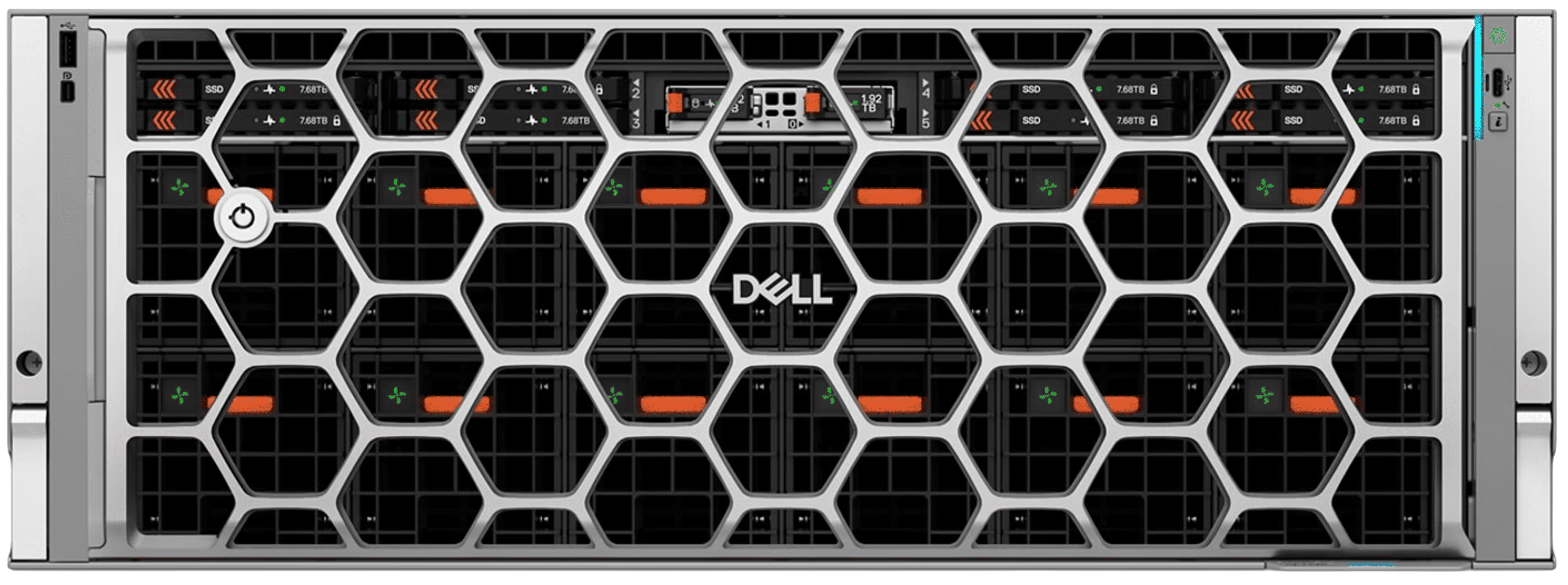

Flexibly configurable Dell PowerEdge R7725

Custom GPU Infrastructure

INGATE delivers any Dell PowerEdge AI server, individually configured with any GPU currently available on the market. Whether NVIDIA H100, H200, B200, B300, RTX PRO 6000 Blackwell, or AMD Instinct, we build your system precisely to your requirements.

Whether you need a single inference GPU or a multi-node cluster with NVLink, we advise you personally, analyze your workload, and recommend the configuration with the best performance per euro.

Dell PowerEdge AI Server — All Models Available

We deliver any Dell PowerEdge AI server individually configured to your specifications. Here is an overview of all available model series:

All models are individually configurable with any currently available GPU. Contact us for your custom quote.

Your Advantages at a Glance

GPU infrastructure for AI and ML is complex. At INGATE, we analyze your workload and recommend the optimal GPU configuration. Your data and models remain on sovereign German infrastructure.

GPU Comparison

Dedicated NVIDIA GPUs for every performance tier.

| Model | GPU Memory | Peak Tensor TFLOPS (precision) | Tensor Cores | Use Case |
|---|---|---|---|---|
| NVIDIA H100 | 80 GB HBM3 | ~3,958 (FP8) | 528 | LLM Training, HPC |
| NVIDIA RTX PRO 6000 Blackwell | 96 GB GDDR7 | ~3,511 (FP4) | 752 | LLM Training, Multi-GPU |
| NVIDIA L40S | 48 GB GDDR6 | ~1,466 (FP8) | 568 | Training, Inference, VDI |
| NVIDIA RTX 6000 Ada | 48 GB GDDR6 | ~1,322 (FP8) | 568 | Rendering, Training |
| NVIDIA L4 Ada | 24 GB GDDR6 | ~485 (FP8) | 240 | Inference, Video, VDI |
| NVIDIA RTX 4000 SFF Ada | 20 GB GDDR6 | ~307 | 192 | Inference, Rendering |
| NVIDIA A2 | 16 GB GDDR6 | ~36 | 40 | Inference (Entry) |

Additional GPU models available on request.
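As a rough first filter, the memory column of the table can be used to find the smallest single GPU that holds a given working set. The Python sketch below hard-codes the memory values from the table; the function name `smallest_fitting_gpu` is hypothetical, and the sketch deliberately ignores memory bandwidth, supported precisions, and price, which matter just as much when choosing a GPU.

```python
from typing import Optional

# Memory per GPU in GB, copied from the comparison table (memory type ignored).
GPU_MEMORY_GB = {
    "NVIDIA H100": 80,
    "NVIDIA RTX PRO 6000 Blackwell": 96,
    "NVIDIA L40S": 48,
    "NVIDIA RTX 6000 Ada": 48,
    "NVIDIA L4 Ada": 24,
    "NVIDIA RTX 4000 SFF Ada": 20,
    "NVIDIA A2": 16,
}


def smallest_fitting_gpu(required_gb: float) -> Optional[str]:
    """Return the GPU with the least memory that still holds required_gb.

    Ties (equal memory) are broken alphabetically by model name. Returns None
    when no single GPU in the table is large enough, i.e. a multi-GPU setup
    would be needed.
    """
    candidates = [(mem, name) for name, mem in GPU_MEMORY_GB.items() if mem >= required_gb]
    if not candidates:
        return None
    # min() compares (memory, name) tuples: smallest memory first, then name.
    return min(candidates)[1]
```

For example, a workload needing about 18 GB lands on the RTX 4000 SFF Ada, while anything above 96 GB returns `None` and points toward a multi-GPU configuration.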

More Services

Personal Hardware Consulting

Which GPU architecture suits your framework? How much GPU memory do you need? Is a multi-GPU setup worthwhile? We analyze your requirements and recommend the configuration with the best performance per euro.
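The GPU memory an inference workload needs can be estimated from the model's parameter count and weight precision. The sketch below is a rule of thumb, not a sizing guarantee: it assumes a flat 20% overhead on top of the weights for KV cache, activations, and the CUDA context (the real overhead depends on batch size and context length), and the helper names are hypothetical.

```python
import math

# Approximate storage per parameter for common weight formats (bytes).
BYTES_PER_PARAM = {"fp32": 4.0, "bf16": 2.0, "fp16": 2.0, "fp8": 1.0, "int4": 0.5}


def inference_memory_gb(params_billion: float, dtype: str = "fp16", overhead: float = 1.2) -> float:
    """Estimate the GPU memory (GB) needed to serve a model.

    Weights: params_billion * 1e9 params * bytes/param / 1e9 bytes per GB.
    overhead is an assumed multiplier for KV cache, activations and CUDA context.
    """
    weights_gb = params_billion * BYTES_PER_PARAM[dtype]
    return weights_gb * overhead


def gpus_needed(required_gb: float, gpu_memory_gb: float) -> int:
    """Minimum number of identical GPUs whose combined memory covers required_gb."""
    return math.ceil(required_gb / gpu_memory_gb)
```

Under these assumptions, a 70-billion-parameter model in FP16 comes out at roughly 168 GB, which means at least three 80 GB GPUs (such as the H100) or two 96 GB GPUs (such as the RTX PRO 6000 Blackwell). Splitting a model across GPUs adds communication overhead, so the GPU count is a lower bound. Training needs considerably more memory than inference, because gradients and optimizer state are stored in addition to the weights.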

CUDA Support & Data Sovereignty

CUDA, cuDNN, and TensorRT pre-installed on request, plus a container runtime for GPU workloads. Your training data and models remain on German infrastructure, with no exposure to the US CLOUD Act.
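Whether the installed driver actually sees the GPUs can be checked with `nvidia-smi`, which ships with the NVIDIA driver. The Python sketch below runs its CSV query mode and parses the result; the `GpuInfo` class and helper names are hypothetical, and memory is reported in MiB because of the `nounits` format option.

```python
import shutil
import subprocess
from dataclasses import dataclass
from typing import List

# nvidia-smi query: one CSV line per GPU, no header row, no unit suffixes.
QUERY = [
    "nvidia-smi",
    "--query-gpu=name,memory.total,driver_version",
    "--format=csv,noheader,nounits",
]


@dataclass
class GpuInfo:
    name: str
    memory_mib: int
    driver: str


def parse_gpu_csv(text: str) -> List[GpuInfo]:
    """Parse the CSV output of the query above into GpuInfo records."""
    gpus = []
    for line in text.strip().splitlines():
        if not line.strip():
            continue  # skip blank lines
        name, mem, driver = (field.strip() for field in line.split(","))
        gpus.append(GpuInfo(name, int(mem), driver))
    return gpus


def query_gpus() -> List[GpuInfo]:
    """Run nvidia-smi on this machine and return the visible GPUs."""
    if shutil.which("nvidia-smi") is None:
        raise RuntimeError("nvidia-smi not found: NVIDIA driver missing or not on PATH")
    result = subprocess.run(QUERY, capture_output=True, text=True, check=True)
    return parse_gpu_csv(result.stdout)
```

Calling `query_gpus()` on the server, or inside a container that has GPU access, should return one entry per visible GPU; an exception indicates that the driver or the device passthrough is missing.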

Scalable GPU Clusters

From a single GPU to multi-GPU clusters. Direct Connect to the INGATE Cloud for hybrid workloads.

Network & Connectivity

IPv4 and native IPv6, with IP addresses assigned per RIPE policy. Network cards up to 100 Gbit/s (Intel, Broadcom, NVIDIA ConnectX-6). Direct Connect for minimal latency.
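Link speed determines how quickly datasets and model checkpoints can be moved onto a server. A minimal sketch of the arithmetic, assuming decimal units (1 GB = 10^9 bytes) and an optional efficiency factor for protocol and storage overhead; the function name is hypothetical:

```python
def transfer_seconds(size_gb: float, link_gbit_s: float, efficiency: float = 1.0) -> float:
    """Seconds to move size_gb (decimal GB, 10**9 bytes) over a network link.

    efficiency is the assumed fraction of line rate actually achieved
    (protocol overhead, storage limits); 1.0 means ideal line rate.
    """
    if not 0 < efficiency <= 1:
        raise ValueError("efficiency must be in (0, 1]")
    size_bits = size_gb * 8e9  # 8 bits per byte, 10**9 bytes per GB
    return size_bits / (link_gbit_s * 1e9 * efficiency)
```

At the full 100 Gbit/s line rate, 1 TB (1,000 GB) takes about 80 seconds; at an assumed 80% effective throughput it takes about 100 seconds, compared with roughly 800 seconds over a 10 Gbit/s link at line rate.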

INGATE Premium Support

Support via email and phone, free 24/7 emergency hotline, personal point of contact, and highly qualified on-site personnel.

Managed Option

Every GPU server can optionally be operated as a managed server — with regular system and security updates, GPU monitoring, and framework updates.

Technical Highlights

State-of-the-art infrastructure in our data centers for your business-critical applications.

Redundant Power Supply

Dual-path A/B power supply down to the rack. Dedicated transformers, UPS, and backup generators.

High-Efficiency Cooling

PUE < 1.20 through free cooling and cold aisle containment. Optimized for high-density deployments of up to 20 kW per rack.
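PUE (power usage effectiveness) is the ratio of total facility power to the power drawn by the IT equipment, so a PUE below 1.20 means cooling, power distribution, and other overhead add less than 20% on top of the IT load. The sketch below applies that definition to a rack at the stated 20 kW maximum; the function names are illustrative, and the yearly figure assumes continuous full load.

```python
HOURS_PER_YEAR = 8760  # 365 days, ignoring leap years


def facility_power_kw(it_load_kw: float, pue: float) -> float:
    """Total facility power implied by an IT load and a PUE value (PUE = total / IT)."""
    return it_load_kw * pue


def overhead_kw(it_load_kw: float, pue: float) -> float:
    """Non-IT power (cooling, UPS losses, lighting) attributable to the IT load."""
    return it_load_kw * (pue - 1.0)


def annual_overhead_kwh(it_load_kw: float, pue: float) -> float:
    """Overhead energy per year, assuming the load runs around the clock."""
    return overhead_kw(it_load_kw, pue) * HOURS_PER_YEAR
```

A fully loaded 20 kW rack at PUE 1.20 therefore draws about 24 kW at the facility level, which is about 4 kW of overhead, or roughly 35,000 kWh per year of continuous operation.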

Fire Protection

VESDA early detection and damage-free gas extinguishing system.

High-Speed Backbone

Redundant high-performance backbone with multiple 100 Gbit/s links. Direct peering at DE-CIX and MuCon-X for the lowest latencies.

Physical Security

Security level SK4. Biometric access control and comprehensive video surveillance.

Sustainability

Carbon-neutral operations with 100% green energy. Certified green electricity and waste heat recovery.

Certified Data Centers

Our primary data center EMC Home of Data in Munich holds the following certifications. All additional data centers are at least ISO 27001 certified and powered by 100% renewable energy. Select locations additionally hold SOC 1, SOC 2, and PCI-DSS certifications.

Frequently Asked Questions

Answers to the most important questions about GPU servers.

Which GPU is right for my AI project?

Are multi-GPU setups possible?

What software is pre-installed?

Does my training data stay in Germany?

Which operating systems are supported?

Is a different hardware configuration possible?

What is inference and how does it differ from training?

What are the differences between GPUs for rendering and AI workloads?

Technology Partners & Memberships